Chip product reviews

Consumers are often overwhelmed by the number of products they have to choose from during the buying process. Unsure which product is right for their purpose, they frequently seek out trustworthy reviews.

With its unique test center and editorial independence, Chip offers high-quality reviews that users can trust.

The design of the reviews no longer matched Chip's high ambitions. A cluttered page, an information architecture that didn't fit user needs and a hidden USP were only a few of the drawbacks we noticed during ongoing user studies. It was time for a redesign.

My role

For this project I had the chance to contribute to every step of the UX process.

During the research phase I planned, conducted and analyzed stakeholder interviews and did desk research by summarizing existing research findings. In a first user study I validated previous findings by testing the status quo once again.

In the design phase I created wireframes, developed HTML prototypes and conducted user studies to test them. To integrate stakeholders into the design process and get their buy-in, I communicated results to them on a regular basis.

Stakeholder interviews

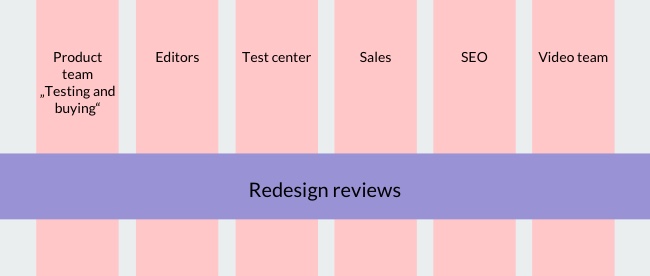

Redesigning the reviews involved several different teams that were distributed over the vertical organizational structure of Chip. It was therefore crucial to get buy-in from all stakeholders and to understand their needs and requirements from the beginning of the project.

I planned and conducted stakeholder interviews with representatives from the editorial, test center, sales, SEO and video teams.

The team of editors would work on the redesigned reviews on a daily basis. That's why I paid special attention to their needs and requirements. The interviews were based on the following questions:

- For which categories do you write reviews?

- What is your process when writing a review? Who do you collaborate with? Are there any guidelines?

- What goal do you have in mind when writing a review?

- For which target audience do you write?

- What makes a review a good one? What does your review need so that you go home happy?

- What makes a Chip review unique? What are the things that we do better than the competition?

- What are the things that we can optimize when redesigning? Why are those things the way they are now?

- Which competitors do a better job than we do?

- Is there anything that you would like to know from our readers?

- Is there anything special that we should take care of when doing user studies?

- What are your expectations for the redesign? Is there anything you worry about?

User research

For the mobile-only m.chip page and both the iOS and Android apps, results from user research about product reviews already existed. I started by analyzing those results.

Based on the previous findings and the results of the stakeholder interviews, I planned and conducted a user study about the current reviews. The purpose was to find out whether and how well the existing findings and the stakeholders' views matched the status quo.

Five people who matched our personas participated in the study. Stakeholders were invited to join the sessions as note takers or to observe them from a remote observer room to which the sessions were streamed.

What I found out

- Pros, cons and summary support users most in their decision.

- Uncertainty about the decision rises the further users read towards the end.

- Alternatives are helpful in general, but there is a risk of an endless loop.

- Users expect more and bigger images of the products; an image gallery on a separate page is not considered helpful.

- Test data: rating categories are interesting but not prominent enough, rating labels are not always self-explanatory, and percentages are not helpful when asking "what is good for me".

- Shop offers sit on the line between helpful price information and intrusive advertising.

- The aside element on the right is considered useless because most of its information is unrelated to the product. It also distracts some users from their goal.

Agreeing on a shared goal

After summarizing the results of both the stakeholder interviews and the research findings, I teamed up with my project manager Marian Löffler. We identified the key points where user needs and stakeholder opinions already matched and where there might still be misalignments.

My project manager set up a meeting with the aim of getting all participating stakeholders to agree on a shared goal. She prepared a presentation to which I contributed aggregated research findings, user quotes and video snippets that I had collected during the user studies.

We agreed on the following goals for the reviews:

- Show editorial independence and objectivity of Chip

- Convey the high-quality work of the test center

- Give a quick overview of the product

- Give a clear buying recommendation

- Show the values of the new Chip design: clear, proficient, progressive

Prototypes

It was crucial for me to get early feedback during the design process from both stakeholders and users. Wireframes and responsive HTML prototypes helped me to achieve that.

Early wireframe

From previous research and the first user study for the review redesign, I learned that users did not find the content of the aside element on the right helpful in supporting their buying decision. Some of them were also distracted by it. In an early wireframe I tried to dissolve the aside element on the right side. I did that by reducing the amount of content, picking the elements that support the users' decision process and integrating them into the review itself.

Responsive HTML prototype

Based on the findings from user research and stakeholder interviews, I created a responsive prototype. Our redesign team offered a styleguide with reusable components in HTML and CSS that made it easy and fast for me to develop prototypes. Building on the Foundation framework, I was able to design a mobile-first prototype that already defined responsive behavior across all three breakpoints: mobile, tablet and desktop.

Learnings from user studies

I tested the responsive HTML prototypes in two successive user studies. Five users participated in the first study that focused on the desktop view. Eight users participated in the second study, with four users testing the mobile view and four testing the desktop view.

Content

Having dissolved the aside element on the right, I noticed that users focused much more on the content itself. There was nothing left to distract them. It became clear that the text has a high impact on how users perceive a review. For example, colorful descriptions strongly appeal to users without much prior technical knowledge.

The length of the text implies competence. Users mentioned that, even if they didn't read the text in full, they would have missed it if it weren't there.

The tested reviews had exactly the same text length as the reviews in the old design. I noticed that users perceived the new reviews to be longer; they even thought that the tester had put greater effort into testing the product.

Fact box

Price widget

Because the price widget was placed directly below the grey fact box, the review seemed finished to some users. They stopped scrolling around that area, thinking that no more content would follow.

A few users had mixed feelings about the price widget. They perceived it as advertising and feared that Chip's independence might suffer. To our great surprise, most users said that the price information was useful to them. It helps them get an idea of the real price they will have to pay.